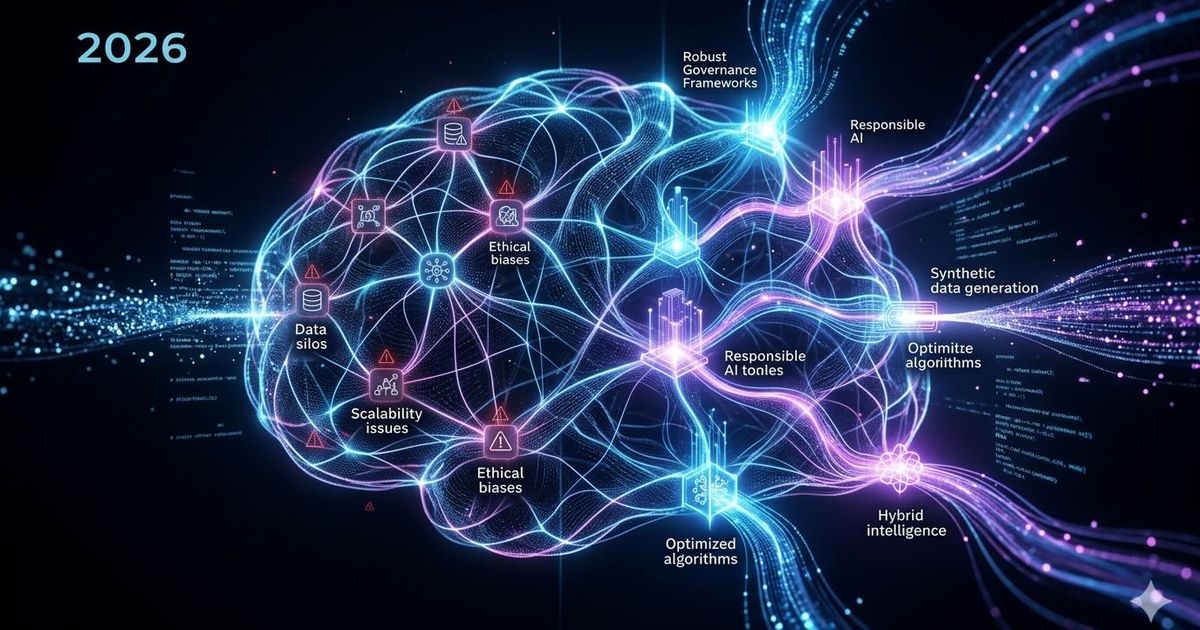

2026 AI Challenges and Solutions

Start feeling the AI Hangover slowly? Trust me, you are not alone. According to MIT’s 2025 report, The GenAI Divide: State of AI in Business 2025, 95% of generative AI pilots fail to deliver measurable business value. Before you assume this is just another AI hate piece, let me stop you right there-it isn't, I promise. My goal in the following article is to give us marketers and executives actual guidance on what it takes to join that 5% success rate. But first, we need a serious reality check.

As of McKinsey’s 2025 Global Survey on AI 88% of organizations use AI in at least one business function, yet scaling remains limited, and value capture is rare.

BCG research consistently shows that about 70% of AI implementation challenges are people- and process-related, 20% technology- and data-related, and only 10% algorithm-related. In 2026, the narrative sharpens further: the hardest problems center on agentic AI governance, cybersecurity, energy constraints, and disciplined strategy, not the models themselves.

Not the models alone, but also the hype and expectations built around them, are the problem. All this is accelerated by the enormous financial pressure that companies like OpenAI face. On a daily basis, we see left-pocket, right-pocket deals between GPU manufacturers, cloud providers, and AI companies.

Fact is, these companies burn cash on a daily basis, and a lot of it. OpenAI and Anthropic are sprinting toward potential IPOs while spending enormous sums to develop their technologies.

Reports from The Wall Street Journal reveal that OpenAI could see its annual costs climb to $85 billion by 2028, aiming for profitability in 2030. Anthropic is operating on a smaller but still expensive scale, expecting its steepest losses in 2026 before potentially turning a profit in 2029, driven by rising corporate revenue.

Tired of reading? We go you!

False expectations and too many AI specialists

“Comment AI SUPER AGENT below and get the ultimate guide to grow your business” - Sounds familiar? Well, surely we all saw one of these LinkedIn Posts within the last 24 hours, right?

So many AI specialists out there suddenly.

Last year, they were Crypto specialists. A bizarre world we are living in. Time for some honesty- I am not a data scientist; I am a marketer, acting as the CEO of an AI startup. Does that make me an AI specialist? Absolutely not. On a daily basis, I am learning new things from the AI team that I was not aware of before. Why? Because AI is math, and math is hard. And frankly, I was never good at math and probably never will be, but an honest evaluation of one's capabilities is the first step toward AI adoption.

One AI to rule them all - far from reality

Luckily, in 2026, we finally reached an AI maturity understanding that provides solid evidence that many of the early “LLMs can do almost everything” claims were nothing but hot air. Recent vendor and independent studies show that models can accelerate parts of work, but they still often fail on longer, multi-step, or real-world tasks, and hallucinations remain a meaningful risk in factual work.

Why the AI hype was overstated

A useful reality check also comes from Anthropic's January 2026 Economic Index, which analyzed large samples of Claude usage and found that task success falls as complexity rises. Anthropic reported that Claude's success rate is lower on more advanced tasks, even as speed gains increase, suggesting the model is most helpful as an assistant rather than a fully autonomous worker. In parallel, reporting on Anthropic's analysis said Claude achieves roughly 60% success on API requests within one hour and about 45% on tasks over five hours, which is a far cry from “solves basically anything”.

Real-world failure evidence

Independent write-ups of agent evaluations are even harsher: one reported a 97% failure rate on real remote work tasks, with the best system completing only 2.5% of projects at a professional level. Another summary of real-world business tests said AI agents still achieve goal completion rates below 55% in CRM-style workflows, and a separate account claimed only 3% completion in real freelance tasks. Even allowing for differences in benchmarks, the pattern is consistent: LLMs perform well in narrow, guided situations, but reliability drops sharply once tasks become long, tool-heavy, or operationally messy.

Hallucination and factual limits

For content and analysis work, hallucination is the other major constraint. A 2025 review in the EMNLP proceedings found that hallucination is a persistent issue across languages, and broader survey work in 2025 concludes that LLMs still generate outputs that sound good but are factually wrong. That matters because many business tasks require exactness, not just plausible prose, so even a small error rate can undermine trust in data analysis, reporting, legal summaries, or customer-facing content.

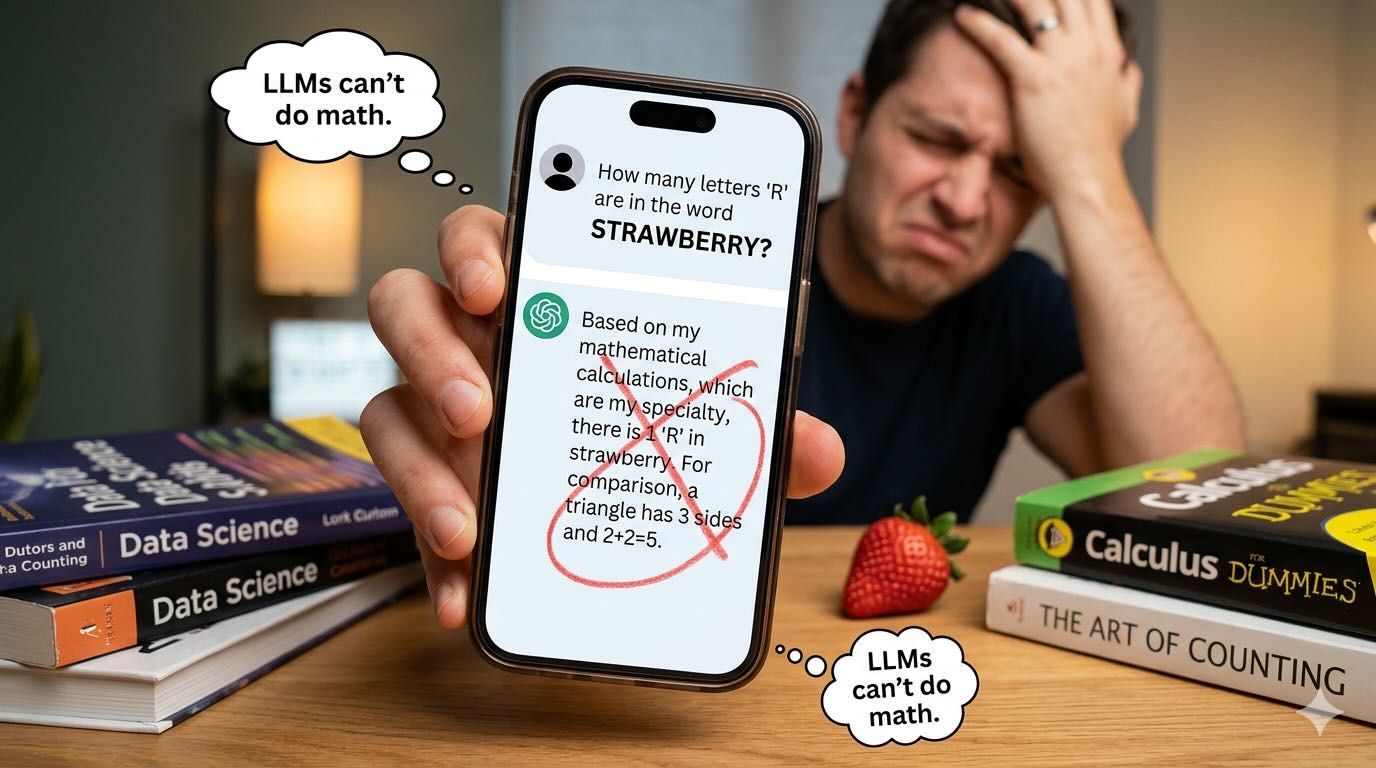

LLMs can do math, stupid! It runs Python in the back

We all know the old stories of asking an LLM how many Rs are in the word strawberry and the answers were absurd. If you run that question now, you will get the right answer. This is not because the LLMs have learned math by now, but have learned that fact through feedback loops over the past years.

Let's be clear: LLMs cannot do math. Expecting to upload your company data and receive validated, data-grounded answers is simply absurd. Some will push back and say this isn't true - that LLMs run Python in the background and therefore can do math. Fair enough. But what does that actually mean in practice? Having access to a tool that can do math is not the same as knowing how to use it correctly.

A personal example: I'm not a mathematician. Handing me a calculator doesn't mean I understand what I'm computing. The same logic applies to LLMs.

When you ask an LLM a mathematical question, it does what it always does - it predicts the next most probable token. Nothing more. That means we have no visibility into which weights or internal decisions are being used to instruct Python. And if we don't know that, we still don't know what's being hallucinated.

Data Scientists are scarce

AI is difficult to scale in companies because the talent needed to build, validate, and operationalize it is still scarce. In the U.S., data scientist employment is projected to grow 34% from 2024 to 2034, with about 23,400 openings per year, according to the Bureau of Labor Statistics BLS data scientist outlook.

That shortage is part of a broader AI skills gap: IDC-backed reporting says over 90% of global enterprises are expected to face critical skills shortages this year, and those gaps could cost the global economy up to $5.5 trillion through delays, missed revenue, and lower competitiveness

IDC skills gap analysis. PwC's 2025 Global AI Jobs Barometer also found that AI-skilled workers command a 56% wage premium, which shows how aggressively companies are competing for the same limited talent pool.

Corporate AI from hell

Having AI tools at your fingertips doesn't mean your company is prepared to use them. While the global conversation around AI often centers on Silicon Valley giants and Fortune 500s, a shift is happening in the middle of the market. Recent data suggest that medium-sized companies (100 to 1,000 employees), are uniquely positioned to outpace both small startups and massive enterprises in AI integration. This phenomenon is driven by a combination of agility and technical readiness.

I am Legend, and it sucks

Unlike large-scale corporations, medium-sized firms are rarely burdened by "messed up" legacy systems that require multi-year modernization efforts before AI can even be discussed. This MIT Sloan research explains why large firms often experience an initial productivity dip when integrating new tech with legacy systems. Conversely, unlike small startups, they possess the capital and data volume necessary to train or fine-tune models effectively. This "sweet spot" allows for a faster transition from experimental pilots to core operational shifts.

The Small Business Administration (SBA) emphasizes that medium-sized firms often possess a "data readiness" advantage because they are built on modern, cloud-first foundations rather than fragmented, decades-old databases. These companies typically maintain more cohesive data ecosystems that allow AI tools to be plugged in and utilized immediately. Unlike massive conglomerates that must spend years reconciling incompatible data formats across various global departments, mid-sized businesses can leverage their cleaner data.

AI sits on top! - On top of what exactly, dude?

Ok, we understand smaller companies are more agile, less hierarchical, and less siloed. But large enterprises can't just ignore AI, right? So what do they usually do? They place AI ‘on top’.

Attempting to layer AI directly onto legacy systems is a common trap that mirrors a historical mistake from the first Industrial Revolution. During the transition to steam power, many factory owners initially tried to simply place a steam engine next to their manual looms, treating the new technology as a peripheral add-on. This approach failed because the existing workflows were never designed for that level of power and speed. True transformation only occurred when entrepreneurs redesigned the entire factory structure, installing central drive shafts and reorganizing layouts to place the energy source at the absolute center of operations.

In the same way, modern businesses often treat AI as a "bolt-on" tool for specific departments, which only reinforces existing silos and technical debt. To capture the full value of the technology, companies must move away from these "island solutions" and instead rebuild their data infrastructure and organizational workflows around AI as the central engine of the business, ensuring that departments like IT, marketing, and legal operate from a unified foundation rather than in a state of constant friction.

Stuck in corporate lobbyism

From our experience, it is not just the legacy systems that prevent AI adoption but also legacy partnerships. Especially large corporations have long-term partnerships with one of the giants, whether it's Google or Microsoft. If the organization uses Microsoft desktops and cloud computing, the chances are high that the entire organization will be forced into ChatGPT or worse, Copilot, which integrates GPT and starts leaking your business information. Starting to sweat already? Let us make it worse read here how a single click can trick copilot into leaking your info. Spoiler, you dont need to be a hacker. Conversely, your company will not choose the best tool but the one with the biggest lobby in your company.

Incentivise AI BS

As stated before, incentives are the new marketing tool for Microsoft and others to push their models in larger corporations. They approach their clients with incentive ideas to encourage the adoption of AI.

Here is a real-life example from one of our clients. Microsoft is their partner for a long time when it comes to computing infrastructure. Microsoft pushed towards an incentive project where different departments compete against each other, and the one that uses the most tokens wins.

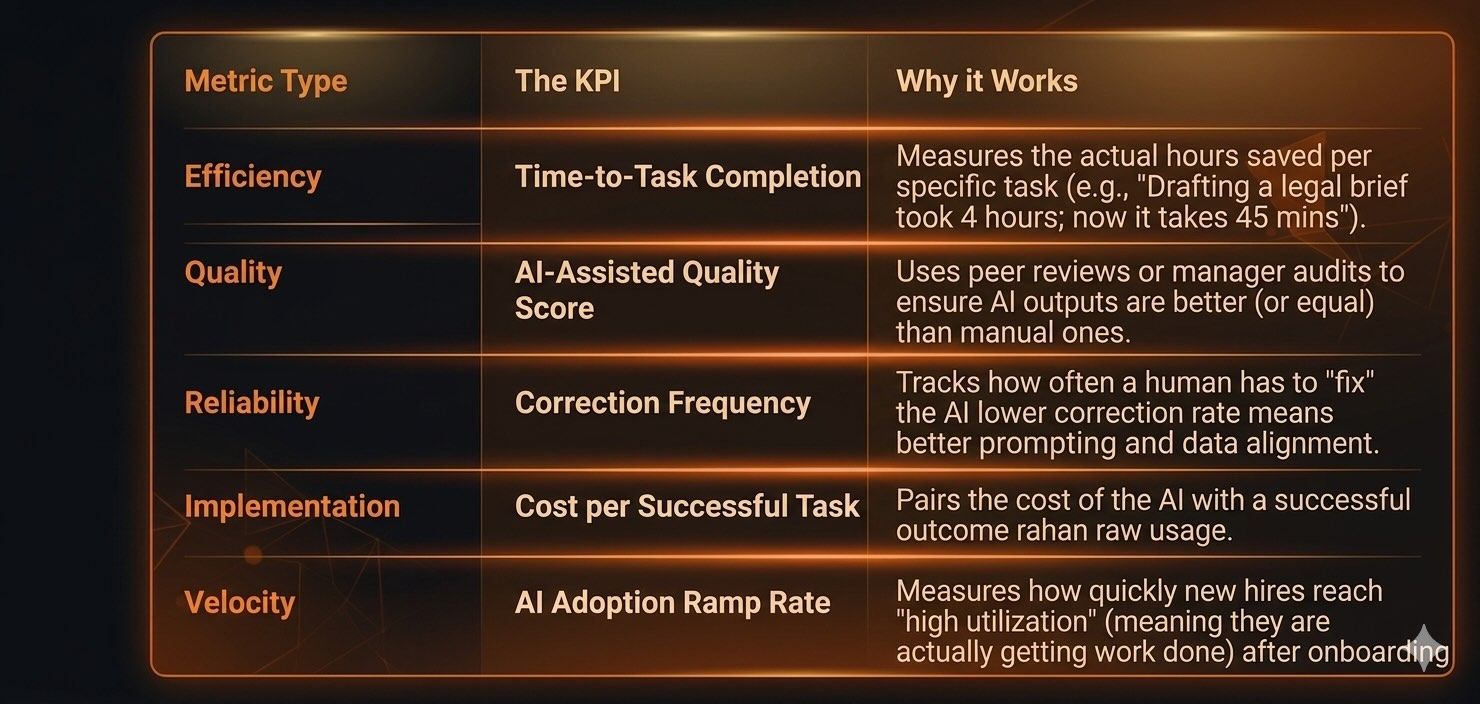

Yes, the KPI was token usage, meaning cost production, meaning just doing something to spend tokens. No ROI KPIs, no efficiency KPI, no time KPIs. In other words, measuring AI success through "tokens spent" is the digital equivalent of measuring a writer's talent by how much ink they use. It rewards volume over value, creating a dangerous cycle of "Token Maxing."

And it fuels the hardware cloud computing bubble we currently see everywhere.

Measuring AI success through token consumption is fundamentally flawed because it encourages "Delegation Theater," where employees perform trivial tasks, like obsessively rephrasing minor internal messages, simply to pad their usage statistics. This metric actively penalizes efficiency by making a skilled user who achieves a perfect result in 100 tokens appear less productive than an amateur who wastes 5,000 tokens through aimless trial and error. Because every token carries a direct dollar cost, this approach effectively monetizes inefficiency, incentivizing staff to inflate operating expenses without any guarantee of increased output.

Ultimately, this triggers the Hawthorne Effect: when employees know they are being judged by a hollow metric, they shift their focus toward optimizing that number rather than delivering meaningful business value, turning the technology into a performative chore rather than a strategic asset.

Remember when Social Media Success was measured with “Likes”? I will just leave this here as a thought.

I got all the choices, you got all my data

If you are not working for a corporation, you might feel a certain relief for a good reason. The chances that AI will boost your business are enormous, but it also comes with risks.

In smaller companies, you usually do not have lengthy compliance and data regulations or legacy relationships that prevent you from choosing the right tools. So how do you usually choose the right tool? Correct, you test it. In order to really test the capabilities of an LLM, you need to feed it with data. And since resources are often scarce, it happens all the time that sensitive information is uploaded to free versions of these LLMs that state that they will use data to train their models unless u have a corporate account with them. Do you or your employees all have a corporate account? I doubt it, which means your data is out in the wild.

As a matter of fact, this is a problem that corporates see too. Recent studies amplify this. Harmonic Security highlights a massive imbalance in enterprise AI risks, revealing that just six applications trigger over 90% of potential data exposures while ChatGPT is the primary driver-accounting for more than 70% of these incidents.

A new report from TELUS Digital reveals a significant "shadow AI" trend, with 57% of enterprise workers admitting they've shared confidential company data with public AI tools. The survey, which focused on large organizations, found that nearly 70% of employees use personal accounts for work tasks, often bypassing official IT channels to boost their efficiency. This data leak includes everything from project prototypes and customer logs to sensitive financial projections. While many employees realize their companies have restrictive policies, a lack of consistent training and enforcement has created a gap where 84% of workers intend to keep using these assistants regardless of the risk.

Instead of total bans, the findings suggest focusing governance on the "big six" while using precise data controls to manage the hundreds of smaller, specialized AI tools that continue to enter the workplace.

Final comment- do your homework!

So, where does this leave us? Somewhere between a Silicon Valley fever dream and a corporate nightmare involving spreadsheets that hallucinate your quarterly earnings. We are currently living through the "Token Maxing" time, a bizarre period of history where companies are literally rewarding employees for being inefficient, provided they burn enough expensive server heat to do it. It is the digital equivalent of a participation trophy, except the trophy costs $80 billion in R&D and occasionally insists that a strawberry has two Rs.

The reality is that we've been sold a "god-in-a-box" that is actually more like a very fast, very confident intern who is prone to lying and has a serious gambling problem with your company's private data. If you are a marketer or an executive, the "5% success rate" doesn't come from finding a magical prompt; it comes from realizing that math is still hard, talent is still expensive, and bolting a jet engine onto a horse-drawn carriage usually just results in a very fast, very dead horse.

Stop playing "Delegation Theater." Stop measuring success by how much ink you can waste. If your AI strategy is currently just a "left-pocket, right-pocket" deal designed to make a GPU manufacturer happy, you aren't an innovator-you're a line item in someone else's IPO prospectus. It is time to stop asking the AI to do your job and start doing the hard work of fixing your data, training your people, and maybe-just maybe-counting the Rs in "strawberry" yourself.

If you are interested in the AI infrastructure challenges, we have the article here for you!